
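Today we walk through building a highly available Kubernetes cluster on a QingCloud VPC that is reached over QingCloud's VPN service. To be clear up front, no automated deployment tooling is used here: as in the earlier deployment guide, every component is installed from binaries. What differs from before is that the network uses Calico and Kubernetes is upgraded to v1.19.8. The container runtime is still Docker; Containerd has not been adapted. Interested readers can switch to Containerd themselves (Containerd implements the Kubernetes Container Runtime Interface (CRI) and provides the core runtime functions such as image management and container management; compared with dockerd it is simpler, more robust and more portable). The concrete steps follow.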

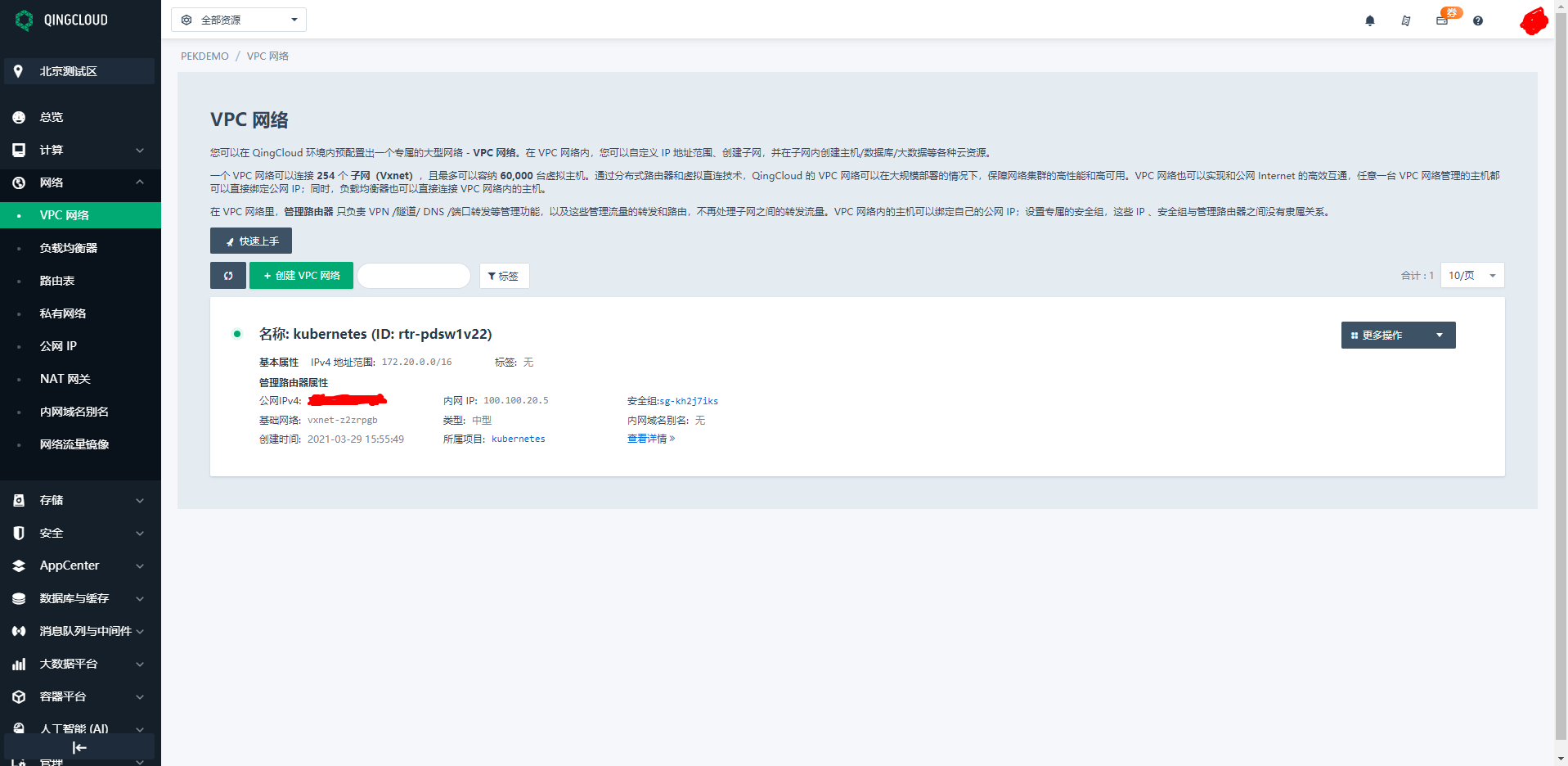

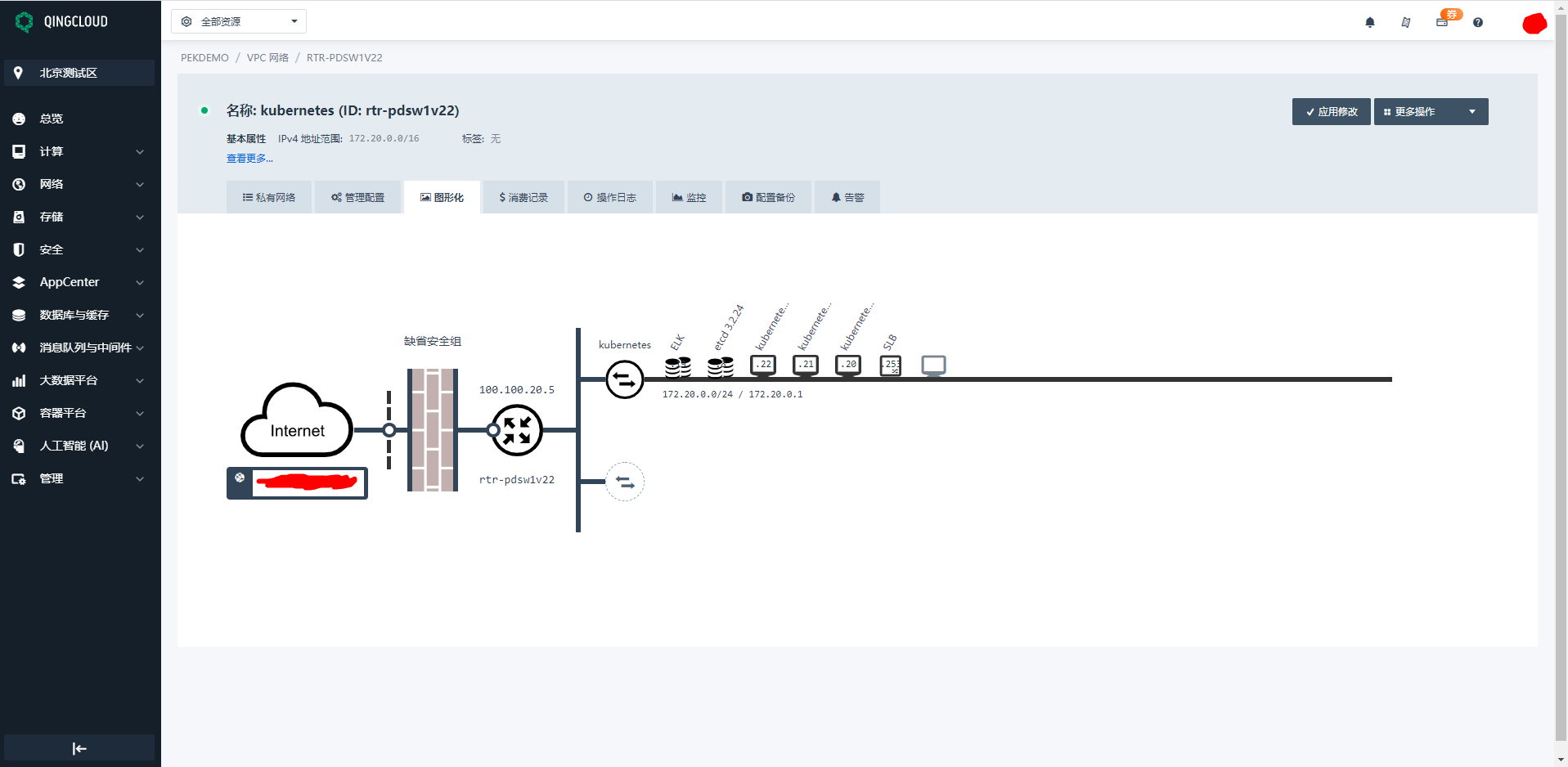

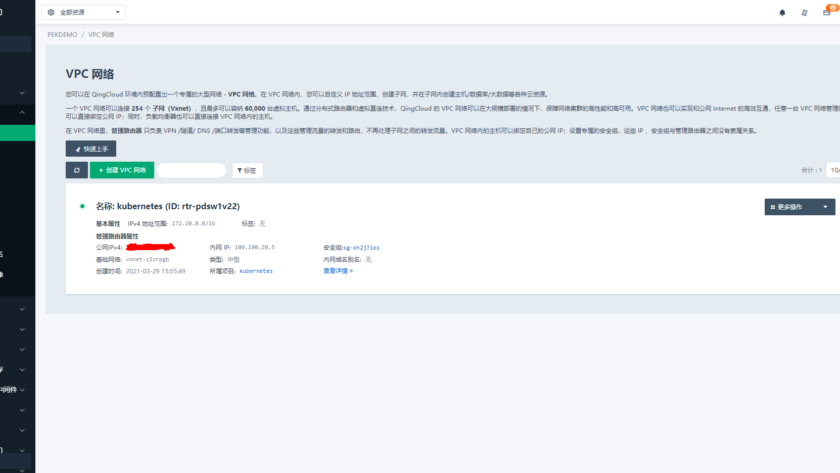

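First, register a QingCloud account and purchase the required resources: cloud hosts, a public IP, a VPC network and so on. Then create a project named Kubernetes and place all of the resources in it so they can be managed together. The VPC network here uses a custom 172.20.0.0/24 subnet, and each cloud host is given an IP address and DNS settings. After that, connecting to the Kubernetes project through QingCloud's built-in VPN service is enough to manage all of the resources (see the official QingCloud documentation for the console operations, which are not repeated here; one reminder is to open the corresponding ports in the security group after configuring the VPN).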

1. Component versions (for reference)

| No. | Component | Version | Notes |
|---|---|---|---|
| 1 | kubernetes | v1.19.8 | |
| 2 | etcd | v3.4.15 | |
| 3 | docker | v19.03.8 | |
| 4 | calico | v3.18.1 | |
| 5 | coredns | v1.8.3 | |
| 6 | dashboard | v2.2.2 | |
| 7 | k8s-prometheus-adapter | v0.5.0 | |
| 8 | prometheus-operator | v0.38.0 | |
| 9 | prometheus | v2.15.2 | |
| 10 | elasticsearch, kibana | v7.11.2 | |
| 11 | cni-plugins | v3.18.1 | |
| 12 | metrics-server | v0.4.2 | |
| 13 | weave | v2.8.1 | |
| 14 | kubeapps | v2.3.1 | |
| 15 | helm | v3.1.0 | |
| 16 | grafana | v1.13.0 | |
| 17 | traefik | v2.1 | |

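Main configuration strategy

kube-apiserver:

- High availability through a node-local nginx layer 4 transparent proxy;

- Insecure port 8080 and anonymous access are disabled;

- HTTPS requests are accepted on secure port 6443;

- Strict authentication and authorization policies (x509, token, RBAC);

- Bootstrap token authentication is enabled to support kubelet TLS bootstrapping;

- kubelet and etcd are accessed over HTTPS so that traffic is encrypted;

kube-controller-manager:

- 3-node high availability;

- The insecure port is disabled and HTTPS requests are accepted on secure port 10252;

- Uses a kubeconfig to access the apiserver secure port;

- Automatically approves kubelet certificate signing requests (CSR) and rotates certificates automatically when they expire;

- Each controller accesses the apiserver with its own ServiceAccount;

kube-scheduler:

- 3-node high availability;

- Uses a kubeconfig to access the apiserver secure port;

kubelet:

- Bootstrap tokens are created dynamically with kubeadm instead of being configured statically in the apiserver;

- Client and server certificates are generated automatically through the TLS bootstrap mechanism and rotated automatically on expiry;

- The main parameters are configured in a JSON file of type KubeletConfiguration;

- The read-only port is disabled; HTTPS requests are accepted on secure port 10250, authenticated and authorized, and anonymous or unauthorized access is rejected;

- Uses a kubeconfig to access the apiserver secure port;

kube-proxy:

- Uses a kubeconfig to access the apiserver secure port;

- The main parameters are configured in a JSON file of type KubeProxyConfiguration;

- Uses the ipvs proxy mode;

Cluster add-ons:

- DNS: coredns, which offers better functionality and performance;

- Dashboard: supports login authentication;

- Metric: metrics-server, accessing the kubelet secure port over HTTPS;

- Log: Elasticsearch, Fluentd, Kibana;

- Registry: docker-registry, harbor;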

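2. System initialization

Cluster plan

- Kubernetes-01: 172.20.0.20

- Kubernetes-02: 172.20.0.21

- Kubernetes-03: 172.20.0.22

Note: unless stated otherwise, every operation in this section must be performed on all nodes.

Set the hostname

# Run the matching command on each node; replace the names below with your own hostnames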

hostnamectl set-hostname kubernetes-01

hostnamectl set-hostname kubernetes-02

hostnamectl set-hostname kubernetes-03

If DNS cannot resolve the hostnames, also add the hostname-to-IP mappings to /etc/hosts on every machine:

cat >> /etc/hosts <<EOF

172.20.0.20 kubernetes-01

172.20.0.21 kubernetes-02

172.20.0.22 kubernetes-03

EOF

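Then log out and log back in as root to see the new hostname take effect.

Set up SSH trust between nodes

This step is only needed on Kubernetes-01. It lets the root account log in to every node without a password: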

ssh-keygen -t rsa

ssh-copy-id root@kubernetes-01

ssh-copy-id root@kubernetes-02

ssh-copy-id root@kubernetes-03

Update the PATH variable

echo 'PATH=/opt/k8s/bin:$PATH' >>/root/.bashrc && source /root/.bashrc

Note: the /opt/k8s/bin directory holds the programs downloaded and installed in this document.

Install dependencies

yum install -y epel-release

yum install -y chrony conntrack ipvsadm ipset jq iptables curl sysstat libseccomp wget socat git

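Note: kube-proxy in this document runs in ipvs mode, and ipvsadm is the management tool for ipvs. The machines in the etcd cluster need synchronized clocks, and chrony provides system time synchronization.

Disable the firewall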

systemctl stop firewalld && systemctl disable firewalld

iptables -F && iptables -X && iptables -F -t nat && iptables -X -t nat && iptables -P FORWARD ACCEPT

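Note: this stops the firewall, flushes its rules and sets the default FORWARD policy to ACCEPT.

# Disable swap

swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

# Disable SELinux

setenforce 0 && sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

Note: swap must be disabled, otherwise kubelet fails to start (alternatively, set the kubelet flag --fail-swap-on to false to skip the swap check). SELinux must be disabled, otherwise kubelet may report Permission denied when mounting directories.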
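The sed expression above comments out every /etc/fstab line that contains " swap ", including lines that are already commented, so a second run leaves "##" prefixes behind. A minimal sketch of a rerun-safe variant follows; the helper name `comment_swap_entries` is hypothetical, and the demo works on a scratch file rather than the real /etc/fstab.

```shell
# Hypothetical helper: comment out active swap entries in an fstab-style file.
# Lines that already start with '#' are left untouched, so reruns are harmless.
comment_swap_entries() {
    sed -i '/^[^#].* swap / s/^/#/' "$1"
}

# Try it on a scratch copy instead of the real /etc/fstab.
demo_fstab=$(mktemp)
printf '%s\n' \
    '/dev/mapper/centos-root / xfs defaults 0 0' \
    '/dev/mapper/centos-swap swap swap defaults 0 0' > "$demo_fstab"
comment_swap_entries "$demo_fstab"
comment_swap_entries "$demo_fstab"   # second run changes nothing
cat "$demo_fstab"
```

On a real node the same function would be called as `comment_swap_entries /etc/fstab`, ideally after keeping a backup copy of the file.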

Tune kernel parameters

cat > kubernetes.conf <<EOF

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

net.ipv4.tcp_tw_recycle=0

net.ipv4.neigh.default.gc_thresh1=1024

net.ipv4.neigh.default.gc_thresh2=2048

net.ipv4.neigh.default.gc_thresh3=4096

vm.swappiness=0

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_instances=8192

fs.inotify.max_user_watches=1048576

fs.file-max=52706963

fs.nr_open=52706963

net.ipv6.conf.all.disable_ipv6=1

net.netfilter.nf_conntrack_max=2310720

EOF

cp kubernetes.conf /etc/sysctl.d/kubernetes.conf

sysctl -p /etc/sysctl.d/kubernetes.conf

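Note: tcp_tw_recycle is turned off because it conflicts with NAT and can make services unreachable. The key was removed in Linux 4.12, so on a newer kernel sysctl reports it as unknown and the line can be dropped.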
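Some keys in kubernetes.conf exist only under certain conditions: the net.bridge.* keys appear once the br_netfilter module is loaded, and tcp_tw_recycle is absent on kernels from 4.12 onward. The sketch below lists keys from a conf file that the running kernel does not expose, which makes errors from `sysctl -p` easier to interpret. The function name `missing_sysctl_keys` and its optional second argument are assumptions made for illustration.

```shell
# Hypothetical helper: print keys from a sysctl conf file that the running
# kernel does not expose. The second argument (default /proc/sys) exists only
# so the function can be exercised against a scratch directory.
missing_sysctl_keys() (
    conf=$1
    root=${2:-/proc/sys}
    # Drop comments and blank lines, keep the key on the left of '='.
    sed -e 's/#.*//' -e '/^[[:space:]]*$/d' -e 's/[[:space:]]*=.*//' "$conf" |
    while read -r key; do
        path=$root/$(printf '%s' "$key" | tr . /)
        [ -e "$path" ] || printf '%s\n' "$key"
    done
)

# Usage on a node, after the file has been copied into place:
#   modprobe br_netfilter
#   missing_sysctl_keys /etc/sysctl.d/kubernetes.conf
```

An empty result means every key in the file maps to an entry under /proc/sys.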

Configure the time zone and clock synchronization

# Set the system time zone

[root@kubernetes-01 work]# timedatectl set-timezone Asia/Shanghai

# Check the synchronization status

[root@kubernetes-01 work]# timedatectl status

Local time: Tue 2021-03-30 14:17:11 CST

Universal time: Tue 2021-03-30 06:17:11 UTC

RTC time: Tue 2021-03-30 06:17:12

Time zone: Asia/Shanghai (CST, +0800)

NTP enabled: yes

NTP synchronized: yes

RTC in local TZ: no

DST active: n/a

[root@kubernetes-01 work]#

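# Write the current UTC time to the hardware clock

[root@kubernetes-01 work]# timedatectl set-local-rtc 0

# Restart services that depend on the system time

[root@kubernetes-01 work]# systemctl restart rsyslog

[root@kubernetes-01 work]# systemctl restart crond

# Stop unrelated services

[root@kubernetes-01 work]# systemctl stop postfix && systemctl disable postfix

# Create the installation directories used in the following steps

[root@kubernetes-01 work]# mkdir -p /opt/k8s/{bin,work} /etc/{kubernetes,etcd}/cert

Note: in the timedatectl output above, NTP synchronized: yes means the clock is synchronized and NTP enabled: yes means the time synchronization service is turned on. Newer systemd releases label the same fields System clock synchronized: yes and NTP service: active.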
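Because the label for the synchronization field differs between systemd versions, a script that checks it should accept both spellings. A minimal sketch, with `clock_is_synchronized` as a hypothetical helper name:

```shell
# Hypothetical helper: succeed when timedatectl-style text on stdin reports a
# synchronized clock. Older systemd prints "NTP synchronized: yes"; newer
# releases print "System clock synchronized: yes".
clock_is_synchronized() {
    grep -Eq '^[[:space:]]*(NTP|System clock) synchronized: yes[[:space:]]*$'
}

# Usage on a node:
#   timedatectl status | clock_is_synchronized && echo "clock in sync"
```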

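Distribute the cluster configuration parameters

The environment variables used in later steps are all defined in the file environment.sh. Adjust them to match your own machines and network: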

#!/usr/bin/bash

# Encryption key required by EncryptionConfig

export ENCRYPTION_KEY=$(head -c 32 /dev/urandom | base64)

# Array of cluster node IPs

export NODE_IPS=(172.20.0.20 172.20.0.21 172.20.0.22)

# Array of hostnames matching the IPs above

export NODE_NAMES=(kubernetes-01 kubernetes-02 kubernetes-03)

# etcd client endpoint list

export ETCD_ENDPOINTS="https://172.20.0.20:2379,https://172.20.0.21:2379,https://172.20.0.22:2379"

# IPs and ports for communication between etcd members

export ETCD_NODES="kubernetes-01=https://172.20.0.20:2380,kubernetes-02=https://172.20.0.21:2380,kubernetes-03=https://172.20.0.22:2380"

# Address and port of the kube-apiserver reverse proxy (kube-nginx)

export KUBE_APISERVER="https://127.0.0.1:8443"

# Name of the network interface used between nodes

export IFACE="eth0"

# etcd data directory

export ETCD_DATA_DIR="/data/k8s/etcd/data"

# etcd WAL directory; an SSD partition, or a partition different from ETCD_DATA_DIR, is recommended

export ETCD_WAL_DIR="/data/k8s/etcd/wal"

# Data directory for the k8s components

export K8S_DIR="/data/k8s/k8s"

## Choose one of DOCKER_DIR and CONTAINERD_DIR

# docker data directory

export DOCKER_DIR="/data/k8s/docker"

# flannel

export FLANNEL_ETCD_PREFIX="/kubernetes/network"

# containerd data directory

# export CONTAINERD_DIR="/data/k8s/containerd"

## The parameters below normally do not need to be changed

# Token used for TLS bootstrapping; can be generated with: head -c 16 /dev/urandom | od -An -t x | tr -d ' '

BOOTSTRAP_TOKEN="41f7e4ba8b7be874fcff18bf5cf41a7c"

# Preferably use currently unused ranges for the service CIDR and the Pod CIDR

# Service CIDR: not routable before deployment, routable inside the cluster afterwards (guaranteed by kube-proxy)

SERVICE_CIDR="10.254.0.0/16"

# Pod CIDR, a /16 is recommended: not routable before deployment, routable inside the cluster afterwards (guaranteed by the network plugin, Calico in this guide)

CLUSTER_CIDR="172.30.0.0/16"

# Service port range (NodePort range)

export NODE_PORT_RANGE="30000-32767"

# kubernetes service IP (usually the first IP in SERVICE_CIDR)

export CLUSTER_KUBERNETES_SVC_IP="10.254.0.1"

# Cluster DNS service IP (pre-allocated from SERVICE_CIDR)

export CLUSTER_DNS_SVC_IP="10.254.0.2"

# Cluster DNS domain (no trailing dot)

export CLUSTER_DNS_DOMAIN="cluster.local"

# Add the binary directory /opt/k8s/bin to PATH

export PATH=/opt/k8s/bin:$PATH

After editing environment.sh, save it under /opt/k8s/bin/ and copy it to all nodes (run the commands below from /opt/k8s/bin, and only after the file has been edited):

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp environment.sh root@${node_ip}:/opt/k8s/bin/

ssh root@${node_ip} "chmod +x /opt/k8s/bin/*"

done

[root@kubernetes-01 bin]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 bin]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> scp environment.sh root@${node_ip}:/opt/k8s/bin/

> ssh root@${node_ip} "chmod +x /opt/k8s/bin/*"

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

environment.sh 100% 2275 6.0MB/s 00:00

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

environment.sh 100% 2275 2.4MB/s 00:00

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

environment.sh 100% 2275 2.6MB/s 00:00

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

[root@kubernetes-01 bin]#
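ETCD_ENDPOINTS and ETCD_NODES in environment.sh repeat the addresses already listed in NODE_IPS and NODE_NAMES, so a typo in one place can silently disagree with the other. The sketch below derives both strings from the node lists instead. The function names `etcd_endpoints` and `etcd_initial_cluster` are hypothetical; ports 2379 and 2380 are the ones used in this guide.

```shell
# Hypothetical helper: build the ETCD_ENDPOINTS value from a list of node IPs.
etcd_endpoints() (
    out=
    for ip in "$@"; do
        out="${out:+$out,}https://${ip}:2379"
    done
    printf '%s\n' "$out"
)

# Hypothetical helper: build the ETCD_NODES value from two space-separated
# lists (names, then IPs). Fails when the lists differ in length.
etcd_initial_cluster() (
    names=$1
    ips=$2
    out=
    set -- $ips
    for name in $names; do
        [ $# -gt 0 ] || { echo "more names than IPs" >&2; exit 1; }
        out="${out:+$out,}${name}=https://$1:2380"
        shift
    done
    [ $# -eq 0 ] || { echo "more IPs than names" >&2; exit 1; }
    printf '%s\n' "$out"
)

etcd_endpoints 172.20.0.20 172.20.0.21 172.20.0.22
etcd_initial_cluster "kubernetes-01 kubernetes-02 kubernetes-03" \
    "172.20.0.20 172.20.0.21 172.20.0.22"
```

With the bash arrays from environment.sh the calls would be `etcd_endpoints "${NODE_IPS[@]}"` and `etcd_initial_cluster "${NODE_NAMES[*]}" "${NODE_IPS[*]}"`.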

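Kernel upgrade

The 3.10.x kernel shipped with CentOS 7.x has bugs that make Docker and Kubernetes unstable, for example:

- Newer docker versions (after 1.13) enable the kernel memory accounting feature, which the 3.10 kernel supports only experimentally and which cannot be turned off. Under node pressure, such as frequently starting and stopping containers, this causes a cgroup memory leak;

- A network device reference count leak, which produces errors like "kernel:unregister_netdevice: waiting for eth0 to become free. Usage count = 1";

The possible fixes are:

- Upgrade the kernel to 4.4.x or later;

- Or compile the kernel manually with the CONFIG_MEMCG_KMEM feature disabled;

- Or install Docker 18.09.1 or later, where the problem is fixed. Because kubelet also sets kmem (it vendors runc), kubelet then has to be recompiled with GOFLAGS="-tags=nokmem";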

git clone --branch v1.14.1 --single-branch --depth 1 https://github.com/kubernetes/kubernetes

cd kubernetes

KUBE_GIT_VERSION=v1.14.1 ./build/run.sh make kubelet GOFLAGS="-tags=nokmem"

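This guide takes the kernel upgrade route:

rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-3.el7.elrepo.noarch.rpm

# After installation, check that the menuentry for the new kernel in /boot/grub2/grub.cfg contains an initrd16 line; if it does not, install again

yum --enablerepo=elrepo-kernel install -y kernel-lt

# Boot from the new kernel by default

grub2-set-default 0

# With all of the steps above complete, every host can be rebooted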

sync

reboot
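After the reboot it is worth confirming that each node really came up on the new kernel. A minimal sketch, assuming GNU `sort -V` is available (it ships with coreutils on CentOS 7); `kernel_at_least` is a hypothetical helper name and 4.4 is the threshold mentioned above.

```shell
# Hypothetical helper: succeed when kernel release $1 is at least version $2.
kernel_at_least() (
    current=${1%%-*}          # strip a suffix such as "-1160.el7.x86_64"
    required=$2
    lowest=$(printf '%s\n%s\n' "$current" "$required" | sort -V | head -n 1)
    [ "$lowest" = "$required" ]
)

# Usage after the reboot:
#   kernel_at_least "$(uname -r)" 4.4 && echo "kernel is new enough"
```

The same check can be run over SSH in the node loop used elsewhere in this document to cover all three hosts.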

References for this section:

- Kernel parameter reference: https://docs.openshift.com/enterprise/3.2/admin_guide/overcommit.html

- Discussion of the 3.10.x kernel kmem bugs and their fixes:

- https://github.com/kubernetes/kubernetes/issues/61937

- https://support.mesosphere.com/s/article/Critical-Issue-KMEM-MSPH-2018-0006

- https://pingcap.com/image/try-to-fix-two-linux-kernel-bugs-while-testing-tidb-operator-in-k8s/

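3. Create the CA certificate and key

For security, the Kubernetes components use x509 certificates to encrypt and authenticate their communication. The CA (Certificate Authority) is a self-signed root certificate used to sign every certificate created later. The CA certificate is shared by all nodes in the cluster and only needs to be created once. This section uses cfssl, CloudFlare's PKI toolkit, to create all certificates.

Note: unless stated otherwise, every operation in this section is performed on the Kubernetes-01 node.

Install the cfssl toolkit

cfssl GitHub project: https://github.com/cloudflare/cfssl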

sudo mkdir -p /opt/k8s/cert && cd /opt/k8s/work

wget https://github.com/cloudflare/cfssl/releases/download/v1.5.0/cfssl_1.5.0_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.5.0/cfssljson_1.5.0_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.5.0/cfssl-certinfo_1.5.0_linux_amd64

mv cfssl_1.5.0_linux_amd64 /opt/k8s/bin/cfssl

mv cfssljson_1.5.0_linux_amd64 /opt/k8s/bin/cfssljson

mv cfssl-certinfo_1.5.0_linux_amd64 /opt/k8s/bin/cfssl-certinfo

chmod +x /opt/k8s/bin/* && export PATH=/opt/k8s/bin:$PATH

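Create the configuration file

The CA configuration file defines the usage scenarios (profiles) of the root certificate and their parameters (usages, expiry, server authentication, client authentication, encryption and so on):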

cd /opt/k8s/work

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "876000h"

}

}

}

}

EOF

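- signing: the certificate can be used to sign other certificates (CA=TRUE in the generated ca.pem);

- server auth: a client can use this certificate to verify the certificate presented by a server;

- client auth: a server can use this certificate to verify the certificate presented by a client;

- "expiry": "876000h": the certificate is valid for 100 years;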
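The 100-year figure follows from dividing the hour count by 24 and then by 365 (leap days ignored). The small sketch below does the same arithmetic for both expiry values in ca-config.json; `hours_to_years` is a hypothetical helper name.

```shell
# Convert a cfssl-style expiry in hours to whole 365-day years.
hours_to_years() {
    echo $(( $1 / 24 / 365 ))
}

hours_to_years 876000   # expiry of the "kubernetes" profile
hours_to_years 87600    # expiry of the "default" signing section
```

So certificates signed with the kubernetes profile last about 100 years, while anything signed with the default section would last about 10.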

Create the certificate signing request file

cd /opt/k8s/work

cat > ca-csr.json <<EOF

{

"CN": "kubernetes-ca",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "z0ukun"

}

],

"ca": {

"expiry": "876000h"

}

}

EOF

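- CN (Common Name): kube-apiserver extracts this field from the certificate as the user name of the request; browsers use it to check whether a website is legitimate;

- O (Organization): kube-apiserver extracts this field from the certificate as the group the requesting user belongs to;

- kube-apiserver uses the extracted User and Group as the identity for RBAC authorization;

Note: the combination of CN, C, ST, L, O and OU must differ between the csr files of different certificates, otherwise a PEER'S CERTIFICATE HAS AN INVALID SIGNATURE error may occur. In the csr files created later the CN values all differ (while C, ST, L, O and OU stay the same) to tell the certificates apart.

Generate the CA certificate and private key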

[root@kubernetes-01 work]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

2021/03/30 14:07:56 [INFO] generating a new CA key and certificate from CSR

2021/03/30 14:07:56 [INFO] generate received request

2021/03/30 14:07:56 [INFO] received CSR

2021/03/30 14:07:56 [INFO] generating key: rsa-2048

2021/03/30 14:07:57 [INFO] encoded CSR

2021/03/30 14:07:57 [INFO] signed certificate with serial number 11321818047568877525981512325340936770890720652

[root@kubernetes-01 work]# ls ca*

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem

Distribute the certificate files

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p /etc/kubernetes/cert"

scp ca*.pem ca-config.json root@${node_ip}:/etc/kubernetes/cert

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> ssh root@${node_ip} "mkdir -p /etc/kubernetes/cert"

> scp ca*.pem ca-config.json root@${node_ip}:/etc/kubernetes/cert

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

ca-key.pem 100% 1675 930.7KB/s 00:00

ca.pem 100% 1322 2.4MB/s 00:00

ca-config.json 100% 293 556.0KB/s 00:00

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

ca-key.pem 100% 1675 998.5KB/s 00:00

ca.pem 100% 1322 1.7MB/s 00:00

ca-config.json 100% 293 433.7KB/s 00:00

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

ca-key.pem 100% 1675 870.4KB/s 00:00

ca.pem 100% 1322 1.7MB/s 00:00

ca-config.json 100% 293 305.5KB/s 00:00

[root@kubernetes-01 work]#

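4. Deploy the kubectl command-line tool

Note: this section covers installing and configuring kubectl, the Kubernetes command-line management tool. It only needs to be done once; the generated kubeconfig file is generic and can be copied to ~/.kube/config on any machine that needs to run kubectl.

Note: unless stated otherwise, every operation in this section is performed on the Kubernetes-01 node.

Download the kubectl binary

cd /opt/k8s/work

# If dl.k8s.io is not reachable from your network, arrange access (for example a proxy) yourself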

wget https://dl.k8s.io/v1.19.8/kubernetes-client-linux-amd64.tar.gz

tar -xzvf kubernetes-client-linux-amd64.tar.gz

[root@kubernetes-01 work]# wget https://dl.k8s.io/v1.19.8/kubernetes-client-linux-amd64.tar.gz

--2021-03-30 14:08:13-- https://dl.k8s.io/v1.19.8/kubernetes-client-linux-amd64.tar.gz

Resolving dl.k8s.io (dl.k8s.io)... 34.107.204.206, 2600:1901:0:26f3::

Connecting to dl.k8s.io (dl.k8s.io)|34.107.204.206|:443... connected.

HTTP request sent, awaiting response... 302 Moved Temporarily

Location: https://storage.googleapis.com/kubernetes-release/release/v1.19.8/kubernetes-client-linux-amd64.tar.gz [following]

--2021-03-30 14:08:16-- https://storage.googleapis.com/kubernetes-release/release/v1.19.8/kubernetes-client-linux-amd64.tar.gz

Resolving storage.googleapis.com (storage.googleapis.com)... 172.217.27.144, 172.217.160.80, 216.58.200.48, ...

Connecting to storage.googleapis.com (storage.googleapis.com)|172.217.27.144|:443... connected.

^C

[root@kubernetes-01 work]# tar -zxvf kubernetes-client-linux-amd64.tar.gz

kubernetes/

kubernetes/client/

kubernetes/client/bin/

kubernetes/client/bin/kubectl

[root@kubernetes-01 work]#

Distribute it to every node that will use kubectl:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp kubernetes/client/bin/kubectl root@${node_ip}:/opt/k8s/bin/

ssh root@${node_ip} "chmod +x /opt/k8s/bin/*"

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> scp kubernetes/client/bin/kubectl root@${node_ip}:/opt/k8s/bin/

> ssh root@${node_ip} "chmod +x /opt/k8s/bin/*"

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

kubectl 100% 41MB 122.7MB/s 00:00

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

kubectl 100% 41MB 93.2MB/s 00:00

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

kubectl 100% 41MB 94.6MB/s 00:00

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

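Create the admin certificate and private key

kubectl communicates with kube-apiserver over HTTPS, and kube-apiserver authenticates and authorizes the certificate carried in each kubectl request. Since kubectl is used for cluster administration later on, an admin certificate with the highest privileges is created here.

Create the certificate signing request: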

cd /opt/k8s/work

cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:masters",

"OU": "z0ukun"

}

]

}

EOF

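- O: system:masters: when kube-apiserver receives a client request that uses this certificate, it adds the group identity system:masters to the request;

- The predefined ClusterRoleBinding cluster-admin binds the Group system:masters to the ClusterRole cluster-admin, which grants the highest privileges needed to operate the cluster;

- The certificate is only used by kubectl as a client certificate, so the hosts field is empty.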
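To make the CN-to-user and O-to-group mapping concrete, the sketch below pulls both fields out of a certificate subject string. The sample string is written by hand in RFC 2253 form from the values in admin-csr.json, which is the shape `openssl x509 -noout -subject -nameopt RFC2253` is expected to print; `subject_field` is a hypothetical helper and does not handle values containing escaped commas.

```shell
# Hypothetical helper: print the value of one field (for example CN or O)
# from an RFC 2253 style subject string.
subject_field() (
    field=$1
    subject=${2#subject=}
    printf '%s\n' "$subject" | tr ',' '\n' |
        sed -n "s/^[[:space:]]*${field}=//p" | head -n 1
)

# Sample subject assembled from admin-csr.json (an illustration, not captured output).
subject='subject=CN=admin,OU=z0ukun,O=system:masters,L=BeiJing,ST=BeiJing,C=CN'
echo "user:  $(subject_field CN "$subject")"
echo "group: $(subject_field O "$subject")"
```

The two printed values are the user name and group that kube-apiserver would attach to requests signed with this certificate.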

Generate the certificate and private key:

cd /opt/k8s/work

cfssl gencert -ca=/opt/k8s/work/ca.pem \

-ca-key=/opt/k8s/work/ca-key.pem \

-config=/opt/k8s/work/ca-config.json \

-profile=kubernetes admin-csr.json | cfssljson -bare admin

ls admin*

[root@kubernetes-01 work]# cfssl gencert -ca=/opt/k8s/work/ca.pem \

> -ca-key=/opt/k8s/work/ca-key.pem \

> -config=/opt/k8s/work/ca-config.json \

> -profile=kubernetes admin-csr.json | cfssljson -bare admin

2021/03/30 14:10:04 [INFO] generate received request

2021/03/30 14:10:04 [INFO] received CSR

2021/03/30 14:10:04 [INFO] generating key: rsa-2048

2021/03/30 14:10:04 [INFO] encoded CSR

2021/03/30 14:10:04 [INFO] signed certificate with serial number 263807722639968682310482022034641272658670741851

2021/03/30 14:10:04 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@kubernetes-01 work]# ls admin*

admin.csr admin-csr.json admin-key.pem admin.pem

[root@kubernetes-01 work]#

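Note: the warning [WARNING] This certificate lacks a "hosts" field can be ignored.

Create the kubeconfig file

kubectl accesses the apiserver through a kubeconfig file, which contains the kube-apiserver address and the credentials (CA certificate and client certificate):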

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

# Set cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/k8s/work/ca.pem \

--embed-certs=true \

--server=https://${NODE_IPS[0]}:6443 \

--kubeconfig=kubectl.kubeconfig

# Set client credentials

kubectl config set-credentials admin \

--client-certificate=/opt/k8s/work/admin.pem \

--client-key=/opt/k8s/work/admin-key.pem \

--embed-certs=true \

--kubeconfig=kubectl.kubeconfig

# Set context parameters

kubectl config set-context kubernetes \

--cluster=kubernetes \

--user=admin \

--kubeconfig=kubectl.kubeconfig

# Set the default context

kubectl config use-context kubernetes --kubeconfig=kubectl.kubeconfig

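- --certificate-authority: the root certificate used to verify the kube-apiserver certificate;

- --client-certificate, --client-key: the admin certificate and private key just generated, used for HTTPS communication with kube-apiserver;

- --embed-certs=true: embeds the contents of ca.pem and admin.pem in the generated kubectl.kubeconfig file (otherwise only the certificate file paths are written, and the certificate files have to be copied separately whenever the kubeconfig is copied to another machine);

- --server: the kube-apiserver address; here it points at the service on the first node.

Distribute the kubeconfig file

Distribute it to every node that will run kubectl commands: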

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p ~/.kube"

scp kubectl.kubeconfig root@${node_ip}:~/.kube/config

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> ssh root@${node_ip} "mkdir -p ~/.kube"

> scp kubectl.kubeconfig root@${node_ip}:~/.kube/config

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

kubectl.kubeconfig 100% 6223 6.6MB/s 00:00

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

kubectl.kubeconfig 100% 6223 2.2MB/s 00:00

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

kubectl.kubeconfig 100% 6223 2.3MB/s 00:00

[root@kubernetes-01 work]#

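5. Deploy the etcd cluster

etcd is a Raft-based distributed key-value store developed by CoreOS, commonly used for service discovery, shared configuration and concurrency control (such as leader election and distributed locks). Kubernetes uses the etcd cluster to persist all API objects and runtime data.

This section describes how to deploy a three-node highly available etcd cluster:

- Download and distribute the etcd binaries;

- Create x509 certificates for the etcd nodes, used to encrypt traffic between clients (such as etcdctl) and the etcd cluster and between etcd members;

- Create the etcd systemd unit file and configure the service parameters;

- Check that the cluster is working;

The etcd node names and IPs are:

- Kubernetes-01: 172.20.0.20

- Kubernetes-02: 172.20.0.21

- Kubernetes-03: 172.20.0.22

Note: unless stated otherwise, every operation in this section is performed on the Kubernetes-01 node.

Download the etcd binaries

etcd downloads: https://github.com/etcd-io/etcd/releases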

cd /opt/k8s/work

wget https://github.com/coreos/etcd/releases/download/v3.4.15/etcd-v3.4.15-linux-amd64.tar.gz

tar -xvf etcd-v3.4.15-linux-amd64.tar.gz

Distribute the binaries to all nodes in the cluster:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp /opt/k8s/work/etcd-v3.4.15-linux-amd64/etcd* root@${node_ip}:/opt/k8s/bin

ssh root@${node_ip} "chmod +x /opt/k8s/bin/*"

done

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> scp /opt/k8s/work/etcd-v3.4.15-linux-amd64/etcd* root@${node_ip}:/opt/k8s/bin

> ssh root@${node_ip} "chmod +x /opt/k8s/bin/*"

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

etcd 100% 23MB 133.4MB/s 00:00

etcdctl 100% 17MB 144.4MB/s 00:00

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

etcd 100% 23MB 120.3MB/s 00:00

etcdctl 100% 17MB 134.3MB/s 00:00

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

etcd 100% 23MB 134.1MB/s 00:00

etcdctl 100% 17MB 133.9MB/s 00:00

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

[root@kubernetes-01 work]#

Create the etcd certificate and private key

Create the certificate signing request:

cd /opt/k8s/work

cat > etcd-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"172.20.0.20",

"172.20.0.21",

"172.20.0.22"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "z0ukun"

}

]

}

EOF

- hosts: the list of etcd node IPs authorized to use this certificate; the IPs of all etcd cluster nodes must be listed.
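Since every etcd node IP has to appear in hosts, the array can be generated from the same node list used elsewhere rather than typed by hand. A minimal sketch; `etcd_hosts_json` is a hypothetical helper that always puts 127.0.0.1 first, matching the file above.

```shell
# Hypothetical helper: print a JSON array for the "hosts" field from a list
# of IPs, with 127.0.0.1 always included as the first entry.
etcd_hosts_json() (
    out='"127.0.0.1"'
    for ip in "$@"; do
        out="$out, \"$ip\""
    done
    printf '[%s]\n' "$out"
)

etcd_hosts_json 172.20.0.20 172.20.0.21 172.20.0.22
```

In bash, with environment.sh sourced, the call would be `etcd_hosts_json "${NODE_IPS[@]}"`.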

Generate the certificate and private key:

cd /opt/k8s/work

cfssl gencert -ca=/opt/k8s/work/ca.pem \

-ca-key=/opt/k8s/work/ca-key.pem \

-config=/opt/k8s/work/ca-config.json \

-profile=kubernetes etcd-csr.json | cfssljson -bare etcd

ls etcd*pem

[root@kubernetes-01 work]# cfssl gencert -ca=/opt/k8s/work/ca.pem \

> -ca-key=/opt/k8s/work/ca-key.pem \

> -config=/opt/k8s/work/ca-config.json \

> -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

2021/03/31 13:23:06 [INFO] generate received request

2021/03/31 13:23:06 [INFO] received CSR

2021/03/31 13:23:06 [INFO] generating key: rsa-2048

2021/03/31 13:23:07 [INFO] encoded CSR

2021/03/31 13:23:07 [INFO] signed certificate with serial number 702521290657600647093264045094244876594460352842

[root@kubernetes-01 work]# ls etcd*pem

etcd-key.pem etcd.pem

[root@kubernetes-01 work]#

Distribute the generated certificate and private key to each etcd node:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p /etc/etcd/cert"

scp etcd*.pem root@${node_ip}:/etc/etcd/cert/

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> ssh root@${node_ip} "mkdir -p /etc/etcd/cert"

> scp etcd*.pem root@${node_ip}:/etc/etcd/cert/

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

etcd-key.pem 100% 1675 1.9MB/s 00:00

etcd.pem 100% 1440 1.8MB/s 00:00

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

etcd-key.pem 100% 1675 1.5MB/s 00:00

etcd.pem 100% 1440 137.6KB/s 00:00

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

etcd-key.pem 100% 1675 1.7MB/s 00:00

etcd.pem 100% 1440 1.6MB/s 00:00

[root@kubernetes-01 work]#

Create the etcd systemd unit template file

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

cat > etcd.service.template <<EOF

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

Documentation=https://github.com/coreos

[Service]

Type=notify

WorkingDirectory=${ETCD_DATA_DIR}

ExecStart=/opt/k8s/bin/etcd \\

--data-dir=${ETCD_DATA_DIR} \\

--wal-dir=${ETCD_WAL_DIR} \\

--name=##NODE_NAME## \\

--cert-file=/etc/etcd/cert/etcd.pem \\

--key-file=/etc/etcd/cert/etcd-key.pem \\

--trusted-ca-file=/etc/kubernetes/cert/ca.pem \\

--peer-cert-file=/etc/etcd/cert/etcd.pem \\

--peer-key-file=/etc/etcd/cert/etcd-key.pem \\

--peer-trusted-ca-file=/etc/kubernetes/cert/ca.pem \\

--peer-client-cert-auth \\

--client-cert-auth \\

--listen-peer-urls=https://##NODE_IP##:2380 \\

--initial-advertise-peer-urls=https://##NODE_IP##:2380 \\

--listen-client-urls=https://##NODE_IP##:2379,http://127.0.0.1:2379 \\

--advertise-client-urls=https://##NODE_IP##:2379 \\

--initial-cluster-token=etcd-cluster-0 \\

--initial-cluster=${ETCD_NODES} \\

--initial-cluster-state=new \\

--auto-compaction-mode=periodic \\

--auto-compaction-retention=1 \\

--max-request-bytes=33554432 \\

--quota-backend-bytes=6442450944 \\

--heartbeat-interval=250 \\

--election-timeout=2000

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

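- WorkingDirectory, --data-dir: set the working directory and data directory to ${ETCD_DATA_DIR}; the directory must be created before the service is started;

- --wal-dir: the WAL directory; for better performance it is usually placed on an SSD or on a different disk from --data-dir;

- --name: the node name; when --initial-cluster-state is new, the value of --name must appear in the --initial-cluster list;

- --cert-file, --key-file: the certificate and private key used by the etcd server when talking to clients;

- --trusted-ca-file: the CA certificate that signed the client certificates, used to verify them;

- --peer-cert-file, --peer-key-file: the certificate and private key etcd uses when talking to peers;

- --peer-trusted-ca-file: the CA certificate that signed the peer certificates, used to verify them.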
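The list above does not cover the numeric flags in the unit file. As a reading aid only (not tuning advice), the sketch below converts the two byte-valued flags to binary units and shows how many heartbeat intervals fit in one election timeout; the helper names are hypothetical.

```shell
# Integer conversions for reading byte-valued flags.
bytes_to_mib() { echo $(( $1 / 1024 / 1024 )); }
bytes_to_gib() { echo $(( $1 / 1024 / 1024 / 1024 )); }

echo "--quota-backend-bytes=6442450944 is $(bytes_to_gib 6442450944) GiB"
echo "--max-request-bytes=33554432 is $(bytes_to_mib 33554432) MiB"
# --heartbeat-interval and --election-timeout are both in milliseconds.
echo "election timeout is $(( 2000 / 250 )) heartbeat intervals"
```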

Create and distribute the etcd systemd unit file for each node

Substitute the variables in the template to create a systemd unit file for each node:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for (( i=0; i < 3; i++ ))

do

sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/" -e "s/##NODE_IP##/${NODE_IPS[i]}/" etcd.service.template > etcd-${NODE_IPS[i]}.service

done

ls *.service

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for (( i=0; i < 3; i++ ))

> do

> sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/" -e "s/##NODE_IP##/${NODE_IPS[i]}/" etcd.service.template > etcd-${NODE_IPS[i]}.service

> done

[root@kubernetes-01 work]# ls *.service

etcd-172.20.0.20.service etcd-172.20.0.21.service etcd-172.20.0.22.service

[root@kubernetes-01 work]#

- NODE_NAMES and NODE_IPS are bash arrays of the same length, holding the node names and their corresponding IPs.
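The placeholder substitution can be tried in isolation before it is pointed at the real template. The sketch below wraps the same sed call in a hypothetical `render_unit` function and runs it on a two-line scratch template.

```shell
# Hypothetical helper: render a unit file from a template by replacing the
# ##NODE_NAME## and ##NODE_IP## placeholders. Arguments: template, name, IP.
render_unit() {
    sed -e "s/##NODE_NAME##/$2/g" -e "s/##NODE_IP##/$3/g" "$1"
}

# Demo on a scratch template that mimics two lines of etcd.service.template.
demo_tpl=$(mktemp)
printf '%s\n' '--name=##NODE_NAME##' \
    '--listen-client-urls=https://##NODE_IP##:2379,http://127.0.0.1:2379' > "$demo_tpl"
render_unit "$demo_tpl" kubernetes-01 172.20.0.20

# In bash, looping over the array length avoids the hard-coded node count:
#   for (( i=0; i < ${#NODE_IPS[@]}; i++ )); do
#       render_unit etcd.service.template "${NODE_NAMES[i]}" "${NODE_IPS[i]}" \
#           > "etcd-${NODE_IPS[i]}.service"
#   done
```

Checking the rendered output for leftover `##` markers is a quick way to catch a placeholder that was misspelled in the template.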

Distribute the generated systemd unit files:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp etcd-${node_ip}.service root@${node_ip}:/etc/systemd/system/etcd.service

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> scp etcd-${node_ip}.service root@${node_ip}:/etc/systemd/system/etcd.service

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

etcd-172.20.0.20.service 100% 1392 2.1MB/s 00:00

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

etcd-172.20.0.21.service 100% 1392 1.5MB/s 00:00

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

etcd-172.20.0.22.service 100% 1392 919.0KB/s 00:00

[root@kubernetes-01 work]#

Start the etcd service

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p ${ETCD_DATA_DIR} ${ETCD_WAL_DIR}"

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable etcd && systemctl restart etcd " &

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> ssh root@${node_ip} "mkdir -p ${ETCD_DATA_DIR} ${ETCD_WAL_DIR}"

> ssh root@${node_ip} "systemctl daemon-reload && systemctl enable etcd && systemctl restart etcd " &

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

[3] 1671

>>> 172.20.0.21

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

[4] 1680

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

[5] 1708

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

[root@kubernetes-01 work]#

[3] Done ssh root@${node_ip} "systemctl daemon-reload && systemctl enable etcd && systemctl restart etcd "

[4] Done ssh root@${node_ip} "systemctl daemon-reload && systemctl enable etcd && systemctl restart etcd "

[5]- Done ssh root@${node_ip} "systemctl daemon-reload && systemctl enable etcd && systemctl restart etcd "

[root@kubernetes-01 work]#

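- The etcd data directory and working directory must be created first;

- On first start, the etcd process waits for the etcd instances on the other nodes to join the cluster, so systemctl start etcd hangs for a while; this is normal.

Check the startup result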

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "systemctl status etcd|grep Active"

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> ssh root@${node_ip} "systemctl status etcd|grep Active"

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

Active: active (running) since Wed 2021-03-31 13:36:34 CST; 33s ago

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

Active: active (running) since Wed 2021-03-31 13:36:34 CST; 33s ago

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

Active: active (running) since Wed 2021-03-31 13:36:34 CST; 34s ago

[root@kubernetes-01 work]#

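Note: make sure the state is active (running); otherwise inspect the logs with journalctl -u etcd to find the cause.

Verify the service status

After the etcd cluster is deployed, run the following on any etcd node: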

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

/opt/k8s/bin/etcdctl \

--endpoints=https://${node_ip}:2379 \

--cacert=/etc/kubernetes/cert/ca.pem \

--cert=/etc/etcd/cert/etcd.pem \

--key=/etc/etcd/cert/etcd-key.pem endpoint health

done

- etcd/etcdctl 3.4.x enables the V3 API by default, so the environment variable ETCDCTL_API=3 no longer has to be set when running etcdctl;

- Starting with K8S 1.13, etcd v2 is no longer supported.

Expected output:

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> /opt/k8s/bin/etcdctl \

> --endpoints=https://${node_ip}:2379 \

> --cacert=/etc/kubernetes/cert/ca.pem \

> --cert=/etc/etcd/cert/etcd.pem \

> --key=/etc/etcd/cert/etcd-key.pem endpoint health

> done

>>> 172.20.0.20

https://172.20.0.20:2379 is healthy: successfully committed proposal: took = 11.520721ms

>>> 172.20.0.21

https://172.20.0.21:2379 is healthy: successfully committed proposal: took = 12.447712ms

>>> 172.20.0.22

https://172.20.0.22:2379 is healthy: successfully committed proposal: took = 12.52316ms

[root@kubernetes-01 work]#

The cluster is working properly when every endpoint reports healthy.
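The "every endpoint is healthy" rule can also be checked mechanically. The following is a minimal sketch, assuming the health output has been captured into a variable; the sample text is copied from the run above, and expected_members is an assumed name for the number of etcd members.

```shell
#!/usr/bin/env bash
# Sample output copied from the run above. On a live cluster, capture the
# output of the etcdctl loop instead (redirecting stderr as well, to be safe).
health_output='https://172.20.0.20:2379 is healthy: successfully committed proposal: took = 11.520721ms
https://172.20.0.21:2379 is healthy: successfully committed proposal: took = 12.447712ms
https://172.20.0.22:2379 is healthy: successfully committed proposal: took = 12.52316ms'

# Assumption: one etcd member per master node, three in total.
expected_members=3

# Count the lines that report "is healthy".
healthy_count=$(printf '%s\n' "${health_output}" | grep -c 'is healthy')

if [ "${healthy_count}" -eq "${expected_members}" ]; then
  echo "etcd OK: ${healthy_count}/${expected_members} endpoints healthy"
else
  echo "etcd DEGRADED: ${healthy_count}/${expected_members} endpoints healthy" >&2
fi
```

Counting lines rather than eyeballing them makes the check usable in a deployment script.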

Check the current leader

source /opt/k8s/bin/environment.sh

/opt/k8s/bin/etcdctl \

-w table --cacert=/etc/kubernetes/cert/ca.pem \

--cert=/etc/etcd/cert/etcd.pem \

--key=/etc/etcd/cert/etcd-key.pem \

--endpoints=${ETCD_ENDPOINTS} endpoint status

The output looks like this:

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# /opt/k8s/bin/etcdctl \

> -w table --cacert=/etc/kubernetes/cert/ca.pem \

> --cert=/etc/etcd/cert/etcd.pem \

> --key=/etc/etcd/cert/etcd-key.pem \

> --endpoints=${ETCD_ENDPOINTS} endpoint status

+--------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+--------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| https://172.20.0.20:2379 | 96ff53158e80760 | 3.4.15 | 20 kB | true | false | 148 | 18 | 18 | |

| https://172.20.0.21:2379 | a27a01e650530e07 | 3.4.15 | 20 kB | false | false | 148 | 18 | 18 | |

| https://172.20.0.22:2379 | f868e309e507a67b | 3.4.15 | 20 kB | false | false | 148 | 18 | 18 | |

+--------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

[root@kubernetes-01 work]#

As the IS LEADER column shows, the current leader is 172.20.0.20.
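Reading the leader out of the table can be scripted as well. This is only a sketch that parses the table text shown above (two of the three member rows are enough to illustrate it); the variable names are assumptions, and the column position of IS LEADER is taken from this etcdctl 3.4.15 output, so it may differ in other versions.

```shell
#!/usr/bin/env bash
# Rows copied from the "endpoint status -w table" output above.
status_table='| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |
| https://172.20.0.20:2379 | 96ff53158e80760 | 3.4.15 | 20 kB | true | false | 148 | 18 | 18 | |
| https://172.20.0.21:2379 | a27a01e650530e07 | 3.4.15 | 20 kB | false | false | 148 | 18 | 18 | |'

# Split on "|": field 2 is ENDPOINT, field 6 is IS LEADER.
# Trim the surrounding spaces, then print the endpoint whose IS LEADER is true.
leader=$(printf '%s\n' "${status_table}" | awk -F'|' '
  { gsub(/^[ \t]+|[ \t]+$/, "", $2); gsub(/^[ \t]+|[ \t]+$/, "", $6) }
  $6 == "true" { print $2 }')

echo "current etcd leader: ${leader}"
```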

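6. Deploy the Master Nodes

The Kubernetes master nodes run the following components:

- kube-apiserver

- kube-scheduler

- kube-controller-manager

kube-apiserver, kube-scheduler and kube-controller-manager all run as multiple instances:

- kube-scheduler and kube-controller-manager elect a leader instance automatically while the other instances stay blocked; when the leader fails, a new leader is elected, which keeps the service available;

- kube-apiserver is stateless and is accessed through the kube-nginx proxy, which keeps the service available;

Note: unless stated otherwise, every operation in this section is run on the kubernetes-01 node.

Download the binaries

Kubernetes v1.19.8 on GitHub: https://github.com/kubernetes/kubernetes/tree/v1.19.8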

cd /opt/k8s/work

# dl.k8s.io may be unreachable from some networks; use a proxy or mirror if needed

wget https://dl.k8s.io/v1.19.8/kubernetes-server-linux-amd64.tar.gz

tar -xzvf kubernetes-server-linux-amd64.tar.gz

cd kubernetes && tar -xzvf kubernetes-src.tar.gz

Copy the binaries to all master nodes:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp kubernetes/server/bin/{apiextensions-apiserver,kube-apiserver,kube-controller-manager,kube-proxy,kube-scheduler,kubeadm,kubectl,kubelet,mounter} root@${node_ip}:/opt/k8s/bin/

ssh root@${node_ip} "chmod +x /opt/k8s/bin/*"

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> scp kubernetes/server/bin/{apiextensions-apiserver,kube-apiserver,kube-controller-manager,kube-proxy,kube-scheduler,kubeadm,kubectl,kubelet,mounter} root@${node_ip}:/opt/k8s/bin/

> ssh root@${node_ip} "chmod +x /opt/k8s/bin/*"

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

apiextensions-apiserver 100% 45MB 110.4MB/s 00:00

kube-apiserver 100% 110MB 124.0MB/s 00:00

kube-controller-manager 100% 102MB 122.9MB/s 00:00

kube-proxy 100% 37MB 111.7MB/s 00:00

kube-scheduler 100% 41MB 112.5MB/s 00:00

kubeadm 100% 37MB 113.0MB/s 00:00

kubectl 100% 41MB 141.4MB/s 00:00

kubelet 100% 105MB 144.7MB/s 00:00

mounter 100% 1596KB 121.0MB/s 00:00

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

apiextensions-apiserver 100% 45MB 102.8MB/s 00:00

kube-apiserver 100% 110MB 109.9MB/s 00:01

kube-controller-manager 100% 102MB 102.3MB/s 00:01

kube-proxy 100% 37MB 105.0MB/s 00:00

kube-scheduler 100% 41MB 96.5MB/s 00:00

kubeadm 100% 37MB 114.6MB/s 00:00

kubectl 100% 41MB 129.1MB/s 00:00

kubelet 100% 105MB 133.4MB/s 00:00

mounter 100% 1596KB 102.6MB/s 00:00

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

apiextensions-apiserver 100% 45MB 103.2MB/s 00:00

kube-apiserver 100% 110MB 109.9MB/s 00:01

kube-controller-manager 100% 102MB 109.2MB/s 00:00

kube-proxy 100% 37MB 102.9MB/s 00:00

kube-scheduler 100% 41MB 41.0MB/s 00:01

kubeadm 100% 37MB 108.8MB/s 00:00

kubectl 100% 41MB 119.0MB/s 00:00

kubelet 100% 105MB 111.5MB/s 00:00

mounter 100% 1596KB 86.1MB/s 00:00

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

[root@kubernetes-01 work]#

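6.1 APIServer Cluster

Note: this subsection walks through deploying a three-instance kube-apiserver cluster. Unless stated otherwise, every operation in this subsection is run on the kubernetes-01 node.

Create the kubernetes-master certificate and private key

Create the certificate signing request: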

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

cat > kubernetes-csr.json <<EOF

{

"CN": "kubernetes-master",

"hosts": [

"127.0.0.1",

"172.20.0.20",

"172.20.0.21",

"172.20.0.22",

"${CLUSTER_KUBERNETES_SVC_IP}",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local.",

"kubernetes.default.svc.${CLUSTER_DNS_DOMAIN}."

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "z0ukun"

}

]

}

EOF

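- The hosts field lists the IPs and domain names that are authorized to use this certificate; here it contains the master node IPs plus the IP and domain names of the kubernetes service;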
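The IP entries in hosts do not have to be typed by hand. The sketch below derives them from variables; the values of NODE_IPS and SERVICE_CIDR are assumptions for illustration (the real ones come from environment.sh), and first_service_ip is a hypothetical helper. It relies on the fact that the kubernetes service is given the first usable address of the service range, which matches the 10.254.0.1 ClusterIP seen later in this guide.

```shell
#!/usr/bin/env bash
# Assumed values for illustration; the real ones come from environment.sh.
NODE_IPS=(172.20.0.20 172.20.0.21 172.20.0.22)
SERVICE_CIDR="10.254.0.0/16"

# Hypothetical helper: print the first usable address of a CIDR.
# It assumes the part before "/" is the network address itself.
first_service_ip() {
  local base="${1%/*}" a b c d n
  IFS=. read -r a b c d <<< "${base}"
  n=$(( (a << 24) + (b << 16) + (c << 8) + d + 1 ))
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

CLUSTER_KUBERNETES_SVC_IP=$(first_service_ip "${SERVICE_CIDR}")

# Emit the IP entries of the "hosts" array, one JSON string per line.
hosts_entries=$(
  for ip in 127.0.0.1 "${NODE_IPS[@]}" "${CLUSTER_KUBERNETES_SVC_IP}"; do
    printf '    "%s",\n' "${ip}"
  done
)
echo "${hosts_entries}"
```

Generating the list from NODE_IPS keeps the certificate in step with the node inventory when a master is added or replaced.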

Generate the certificate and private key:

cfssl gencert -ca=/opt/k8s/work/ca.pem \

-ca-key=/opt/k8s/work/ca-key.pem \

-config=/opt/k8s/work/ca-config.json \

-profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes

ls kubernetes*pem

[root@kubernetes-01 work]# cfssl gencert -ca=/opt/k8s/work/ca.pem \

> -ca-key=/opt/k8s/work/ca-key.pem \

> -config=/opt/k8s/work/ca-config.json \

> -profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes

2021/03/31 14:01:12 [INFO] generate received request

2021/03/31 14:01:12 [INFO] received CSR

2021/03/31 14:01:12 [INFO] generating key: rsa-2048

2021/03/31 14:01:13 [INFO] encoded CSR

2021/03/31 14:01:13 [INFO] signed certificate with serial number 161942050792348668140609684865799859487804413770

[root@kubernetes-01 work]# ls kubernetes*pem

kubernetes-key.pem kubernetes.pem

[root@kubernetes-01 work]#

Copy the generated certificate and private key to all master nodes:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p /etc/kubernetes/cert"

scp kubernetes*.pem root@${node_ip}:/etc/kubernetes/cert/

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> ssh root@${node_ip} "mkdir -p /etc/kubernetes/cert"

> scp kubernetes*.pem root@${node_ip}:/etc/kubernetes/cert/

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

kubernetes-key.pem 100% 1679 2.2MB/s 00:00

kubernetes.pem 100% 1700 3.6MB/s 00:00

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

kubernetes-key.pem 100% 1679 902.7KB/s 00:00

kubernetes.pem 100% 1700 2.1MB/s 00:00

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

kubernetes-key.pem 100% 1679 795.6KB/s 00:00

kubernetes.pem 100% 1700 2.2MB/s 00:00

[root@kubernetes-01 work]#

Create the encryption configuration file

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

cat > encryption-config.yaml <<EOF
kind: EncryptionConfig
apiVersion: v1
resources:
  - resources:
      - secrets
    providers:
      - aescbc:
          keys:
            - name: key1
              secret: ${ENCRYPTION_KEY}
      - identity: {}
EOF
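The ENCRYPTION_KEY referenced above is expected to be a base64-encoded random key. The following is a minimal sketch of one way to produce and sanity-check such a key, assuming a 32-byte key and GNU coreutils; in this guide the variable is normally defined in environment.sh, so treat this as an illustration rather than the author's exact definition.

```shell
#!/usr/bin/env bash
# Generate 32 random bytes and base64-encode them.
ENCRYPTION_KEY=$(head -c 32 /dev/urandom | base64)

# Decode again and count the bytes to confirm the key length.
key_bytes=$(printf '%s' "${ENCRYPTION_KEY}" | base64 -d | wc -c | tr -d ' ')

echo "decoded key length: ${key_bytes} bytes"
```

The same key must be used on every master; generating a new one per node would leave each apiserver unable to read secrets written by the others.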

Copy the encryption configuration file to the /etc/kubernetes directory on all master nodes:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp encryption-config.yaml root@${node_ip}:/etc/kubernetes/

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> scp encryption-config.yaml root@${node_ip}:/etc/kubernetes/

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

encryption-config.yaml 100% 240 273.9KB/s 00:00

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

encryption-config.yaml 100% 240 25.7KB/s 00:00

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

encryption-config.yaml 100% 240 137.7KB/s 00:00

[root@kubernetes-01 work]#

Create the audit policy file

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

cat > audit-policy.yaml <<EOF
apiVersion: audit.k8s.io/v1beta1
kind: Policy
rules:
  # The following requests were manually identified as high-volume and low-risk, so drop them.
  - level: None
    resources:
      - group: ""
        resources:
          - endpoints
          - services
          - services/status
    users:
      - 'system:kube-proxy'
    verbs:
      - watch
  - level: None
    resources:
      - group: ""
        resources:
          - nodes
          - nodes/status
    userGroups:
      - 'system:nodes'
    verbs:
      - get
  - level: None
    namespaces:
      - kube-system
    resources:
      - group: ""
        resources:
          - endpoints
    users:
      - 'system:kube-controller-manager'
      - 'system:kube-scheduler'
      - 'system:serviceaccount:kube-system:endpoint-controller'
    verbs:
      - get
      - update
  - level: None
    resources:
      - group: ""
        resources:
          - namespaces
          - namespaces/status
          - namespaces/finalize
    users:
      - 'system:apiserver'
    verbs:
      - get
  # Don't log HPA fetching metrics.
  - level: None
    resources:
      - group: metrics.k8s.io
    users:
      - 'system:kube-controller-manager'
    verbs:
      - get
      - list
  # Don't log these read-only URLs.
  - level: None
    nonResourceURLs:
      - '/healthz*'
      - /version
      - '/swagger*'
  # Don't log events requests.
  - level: None
    resources:
      - group: ""
        resources:
          - events
  # node and pod status calls from nodes are high-volume and can be large, don't log responses
  # for expected updates from nodes
  - level: Request
    omitStages:
      - RequestReceived
    resources:
      - group: ""
        resources:
          - nodes/status
          - pods/status
    users:
      - kubelet
      - 'system:node-problem-detector'
      - 'system:serviceaccount:kube-system:node-problem-detector'
    verbs:
      - update
      - patch
  - level: Request
    omitStages:
      - RequestReceived
    resources:
      - group: ""
        resources:
          - nodes/status
          - pods/status
    userGroups:
      - 'system:nodes'
    verbs:
      - update
      - patch
  # deletecollection calls can be large, don't log responses for expected namespace deletions
  - level: Request
    omitStages:
      - RequestReceived
    users:
      - 'system:serviceaccount:kube-system:namespace-controller'
    verbs:
      - deletecollection
  # Secrets, ConfigMaps, and TokenReviews can contain sensitive & binary data,
  # so only log at the Metadata level.
  - level: Metadata
    omitStages:
      - RequestReceived
    resources:
      - group: ""
        resources:
          - secrets
          - configmaps
      - group: authentication.k8s.io
        resources:
          - tokenreviews
  # Get responses can be large; skip them.
  - level: Request
    omitStages:
      - RequestReceived
    resources:
      - group: ""
      - group: admissionregistration.k8s.io
      - group: apiextensions.k8s.io
      - group: apiregistration.k8s.io
      - group: apps
      - group: authentication.k8s.io
      - group: authorization.k8s.io
      - group: autoscaling
      - group: batch
      - group: certificates.k8s.io
      - group: extensions
      - group: metrics.k8s.io
      - group: networking.k8s.io
      - group: policy
      - group: rbac.authorization.k8s.io
      - group: scheduling.k8s.io
      - group: settings.k8s.io
      - group: storage.k8s.io
    verbs:
      - get
      - list
      - watch
  # Default level for known APIs
  - level: RequestResponse
    omitStages:
      - RequestReceived
    resources:
      - group: ""
      - group: admissionregistration.k8s.io
      - group: apiextensions.k8s.io
      - group: apiregistration.k8s.io
      - group: apps
      - group: authentication.k8s.io
      - group: authorization.k8s.io
      - group: autoscaling
      - group: batch
      - group: certificates.k8s.io
      - group: extensions
      - group: metrics.k8s.io
      - group: networking.k8s.io
      - group: policy
      - group: rbac.authorization.k8s.io
      - group: scheduling.k8s.io
      - group: settings.k8s.io
      - group: storage.k8s.io
  # Default level for all other requests.
  - level: Metadata
    omitStages:
      - RequestReceived
EOF

Distribute the audit policy file:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp audit-policy.yaml root@${node_ip}:/etc/kubernetes/audit-policy.yaml

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> scp audit-policy.yaml root@${node_ip}:/etc/kubernetes/audit-policy.yaml

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

audit-policy.yaml 100% 4322 4.9MB/s 00:00

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

audit-policy.yaml 100% 4322 3.5MB/s 00:00

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

audit-policy.yaml 100% 4322 2.8MB/s 00:00

[root@kubernetes-01 work]#

Create the certificate used later to access metrics-server or kube-prometheus

Create the certificate signing request:

cd /opt/k8s/work

cat > proxy-client-csr.json <<EOF

{

"CN": "aggregator",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "z0ukun"

}

]

}

EOF

- The CN must be listed in kube-apiserver's --requestheader-allowed-names parameter; otherwise later requests for metrics are rejected with an insufficient-permission error.
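The CN rule above is a simple membership test against a comma-separated list. Here is a small sketch of that test; cn_is_allowed is a hypothetical helper written for illustration, not part of any Kubernetes tooling.

```shell
#!/usr/bin/env bash
# Hypothetical helper: return 0 if the certificate CN ($1) appears in the
# comma-separated list of allowed names ($2), and 1 otherwise.
cn_is_allowed() {
  local cn="$1" allowed="$2" name
  local -a names
  IFS=',' read -r -a names <<< "${allowed}"
  for name in "${names[@]}"; do
    [ "${name}" = "${cn}" ] && return 0
  done
  return 1
}

# "aggregator" is the value this guide passes to --requestheader-allowed-names.
ALLOWED_NAMES="aggregator"

if cn_is_allowed "aggregator" "${ALLOWED_NAMES}"; then
  echo "CN aggregator is accepted"
else
  echo "CN aggregator would be rejected" >&2
fi
```

Running a check like this before generating the certificate catches a mismatched CN early, instead of at the first metrics query.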

Generate the certificate and private key:

cfssl gencert -ca=/etc/kubernetes/cert/ca.pem \

-ca-key=/etc/kubernetes/cert/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \

-profile=kubernetes proxy-client-csr.json | cfssljson -bare proxy-client

ls proxy-client*.pem

[root@kubernetes-01 work]# cfssl gencert -ca=/etc/kubernetes/cert/ca.pem \

> -ca-key=/etc/kubernetes/cert/ca-key.pem \

> -config=/etc/kubernetes/cert/ca-config.json \

> -profile=kubernetes proxy-client-csr.json | cfssljson -bare proxy-client

2021/03/31 14:03:13 [INFO] generate received request

2021/03/31 14:03:13 [INFO] received CSR

2021/03/31 14:03:13 [INFO] generating key: rsa-2048

2021/03/31 14:03:13 [INFO] encoded CSR

2021/03/31 14:03:13 [INFO] signed certificate with serial number 519453823699954399345164730015346247955623868178

2021/03/31 14:03:13 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@kubernetes-01 work]# ls proxy-client*.pem

proxy-client-key.pem proxy-client.pem

[root@kubernetes-01 work]#

Copy the generated certificate and private key to all master nodes:

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp proxy-client*.pem root@${node_ip}:/etc/kubernetes/cert/

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> scp proxy-client*.pem root@${node_ip}:/etc/kubernetes/cert/

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

proxy-client-key.pem 100% 1675 2.8MB/s 00:00

proxy-client.pem 100% 1399 3.7MB/s 00:00

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

proxy-client-key.pem 100% 1675 1.7MB/s 00:00

proxy-client.pem 100% 1399 1.9MB/s 00:00

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

proxy-client-key.pem 100% 1675 1.6MB/s 00:00

proxy-client.pem 100% 1399 1.5MB/s 00:00

[root@kubernetes-01 work]#

Create the kube-apiserver systemd unit template file

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

cat > kube-apiserver.service.template <<EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

WorkingDirectory=${K8S_DIR}/kube-apiserver

ExecStart=/opt/k8s/bin/kube-apiserver \\

--advertise-address=##NODE_IP## \\

--default-not-ready-toleration-seconds=360 \\

--default-unreachable-toleration-seconds=360 \\

--max-mutating-requests-inflight=2000 \\

--max-requests-inflight=4000 \\

--default-watch-cache-size=200 \\

--delete-collection-workers=2 \\

--encryption-provider-config=/etc/kubernetes/encryption-config.yaml \\

--etcd-cafile=/etc/kubernetes/cert/ca.pem \\

--etcd-certfile=/etc/kubernetes/cert/kubernetes.pem \\

--etcd-keyfile=/etc/kubernetes/cert/kubernetes-key.pem \\

--etcd-servers=${ETCD_ENDPOINTS} \\

--bind-address=##NODE_IP## \\

--secure-port=6443 \\

--tls-cert-file=/etc/kubernetes/cert/kubernetes.pem \\

--tls-private-key-file=/etc/kubernetes/cert/kubernetes-key.pem \\

--insecure-port=0 \\

--audit-log-maxage=15 \\

--audit-log-maxbackup=3 \\

--audit-log-maxsize=100 \\

--audit-log-truncate-enabled \\

--audit-log-path=${K8S_DIR}/kube-apiserver/audit.log \\

--audit-policy-file=/etc/kubernetes/audit-policy.yaml \\

--profiling \\

--anonymous-auth=false \\

--client-ca-file=/etc/kubernetes/cert/ca.pem \\

--enable-bootstrap-token-auth \\

--requestheader-allowed-names="aggregator" \\

--requestheader-client-ca-file=/etc/kubernetes/cert/ca.pem \\

--requestheader-extra-headers-prefix="X-Remote-Extra-" \\

--requestheader-group-headers=X-Remote-Group \\

--requestheader-username-headers=X-Remote-User \\

--service-account-key-file=/etc/kubernetes/cert/ca.pem \\

--authorization-mode=Node,RBAC \\

--runtime-config=api/all=true \\

--enable-admission-plugins=NodeRestriction \\

--allow-privileged=true \\

--apiserver-count=3 \\

--event-ttl=168h \\

--kubelet-certificate-authority=/etc/kubernetes/cert/ca.pem \\

--kubelet-client-certificate=/etc/kubernetes/cert/kubernetes.pem \\

--kubelet-client-key=/etc/kubernetes/cert/kubernetes-key.pem \\

--kubelet-https=true \\

--kubelet-timeout=10s \\

--proxy-client-cert-file=/etc/kubernetes/cert/proxy-client.pem \\

--proxy-client-key-file=/etc/kubernetes/cert/proxy-client-key.pem \\

--service-cluster-ip-range=${SERVICE_CIDR} \\

--service-node-port-range=${NODE_PORT_RANGE} \\

--logtostderr=true \\

--v=2

Restart=on-failure

RestartSec=10

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

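- --advertise-address: the IP that the apiserver advertises (the backend node IP of the kubernetes service);

- --default-*-toleration-seconds: thresholds related to node failures;

- --max-*-requests-inflight: upper limits on in-flight requests;

- --etcd-*: the certificates used to access etcd and the etcd server addresses;

- --bind-address: the IP that https listens on; it must not be 127.0.0.1, otherwise the secure port 6443 cannot be reached from outside;

- --secure-port: the https listening port;

- --insecure-port=0: disables the insecure http port (8080);

- --tls-*-file: the certificate and private key files used by the apiserver;

- --audit-*: parameters for the audit policy and the audit log files;

- --client-ca-file: verifies the certificates presented by clients (kube-controller-manager, kube-scheduler, kubelet, kube-proxy and so on);

- --enable-bootstrap-token-auth: enables token authentication for kubelet bootstrap;

- --requestheader-*: configuration for kube-apiserver's aggregator layer, needed by proxy-client and HPA;

- --requestheader-client-ca-file: the CA that signed the certificate specified by --proxy-client-cert-file and --proxy-client-key-file; used when the metrics aggregator is enabled;

- --requestheader-allowed-names: must not be empty; a comma-separated list of CN names of the --proxy-client-cert-file certificate, set to "aggregator" here;

- --service-account-key-file: the public key file used to verify ServiceAccount tokens; kube-controller-manager's --service-account-private-key-file specifies the private key that signs them, and the two are used as a pair;

- --runtime-config=api/all=true: enables all API versions, such as autoscaling/v2alpha1;

- --authorization-mode=Node,RBAC, --anonymous-auth=false: enables the Node and RBAC authorization modes and rejects anonymous and unauthorized requests;

- --enable-admission-plugins: enables some plugins that are off by default;

- --allow-privileged: allows containers to run with privileged permissions;

- --apiserver-count=3: the number of apiserver instances;

- --event-ttl: how long events are retained;

- --kubelet-*: if specified, the kubelet APIs are accessed over https; RBAC rules must be defined for the user of the certificate (the CN of the kubernetes.pem certificate above is kubernetes-master), otherwise access to the kubelet API is rejected as unauthorized;

- --proxy-client-*: the certificate the apiserver uses to access metrics-server;

- --service-cluster-ip-range: the Service cluster IP range;

- --service-node-port-range: the NodePort port range;

Note: if the kube-apiserver machines do not run kube-proxy, the --enable-aggregator-routing=true parameter must be added as well;

For the --requestheader-XXX parameters, see:

- https://github.com/kubernetes-incubator/apiserver-builder/blob/master/docs/concepts/auth.md

- https://docs.bitnami.com/kubernetes/how-to/configure-autoscaling-custom-metrics/

Note: the CA certificate specified by --requestheader-client-ca-file must have both client auth and server auth; if --requestheader-allowed-names is not empty and the CN of the --proxy-client-cert-file certificate is not in allowed-names, later queries for node or pod metrics fail with a permission error.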

Create and distribute the kube-apiserver systemd unit file for each node

Substitute the variables in the template to generate a systemd unit file for each node:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for (( i=0; i < 3; i++ ))

do

sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/" -e "s/##NODE_IP##/${NODE_IPS[i]}/" kube-apiserver.service.template > kube-apiserver-${NODE_IPS[i]}.service

done

ls kube-apiserver*.service

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for (( i=0; i < 3; i++ ))

> do

> sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/" -e "s/##NODE_IP##/${NODE_IPS[i]}/" kube-apiserver.service.template > kube-apiserver-${NODE_IPS[i]}.service

> done

[root@kubernetes-01 work]# ls kube-apiserver*.service

kube-apiserver-172.20.0.20.service kube-apiserver-172.20.0.21.service kube-apiserver-172.20.0.22.service

[root@kubernetes-01 work]#

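- NODE_NAMES and NODE_IPS are bash arrays of equal length that hold the node names and the corresponding IPs; the kube-apiserver template only contains ##NODE_IP##, so the ##NODE_NAME## substitution is a no-op here;

Distribute the generated systemd unit files: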
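To see the placeholder substitution in isolation, the sketch below renders a cut-down template for each node in a temporary directory. The template content, the demo file names and the workdir variable are assumptions made for illustration; only the sed expression mirrors the command used in this guide.

```shell
#!/usr/bin/env bash
# Assumed node list; in the guide it comes from environment.sh.
NODE_IPS=(172.20.0.20 172.20.0.21 172.20.0.22)

# Work in a throwaway directory so nothing under /opt/k8s/work is touched.
workdir=$(mktemp -d)

# A cut-down template with the same ##NODE_IP## placeholder.
cat > "${workdir}/demo.service.template" <<'EOF'
ExecStart=/opt/k8s/bin/kube-apiserver \
  --advertise-address=##NODE_IP## \
  --bind-address=##NODE_IP##
EOF

# Render one unit file per node by replacing the placeholder.
for node_ip in "${NODE_IPS[@]}"; do
  sed -e "s/##NODE_IP##/${node_ip}/" "${workdir}/demo.service.template" \
    > "${workdir}/demo-${node_ip}.service"
done

ls "${workdir}"
```

Each placeholder sits on its own line, so the substitution without the g flag is sufficient; a template with two placeholders on one line would need s/.../.../g.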

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp kube-apiserver-${node_ip}.service root@${node_ip}:/etc/systemd/system/kube-apiserver.service

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> scp kube-apiserver-${node_ip}.service root@${node_ip}:/etc/systemd/system/kube-apiserver.service

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

kube-apiserver-172.20.0.20.service 100% 2506 3.6MB/s 00:00

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

kube-apiserver-172.20.0.21.service 100% 2506 2.8MB/s 00:00

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

kube-apiserver-172.20.0.22.service 100% 2506 2.5MB/s 00:00

[root@kubernetes-01 work]#

Start the kube-apiserver service

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p ${K8S_DIR}/kube-apiserver"

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-apiserver && systemctl restart kube-apiserver"

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> ssh root@${node_ip} "mkdir -p ${K8S_DIR}/kube-apiserver"

> ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-apiserver && systemctl restart kube-apiserver"

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-apiserver.service to /etc/systemd/system/kube-apiserver.service.

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-apiserver.service to /etc/systemd/system/kube-apiserver.service.

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-apiserver.service to /etc/systemd/system/kube-apiserver.service.

[root@kubernetes-01 work]#

Check that kube-apiserver is running

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "systemctl status kube-apiserver |grep 'Active:'"

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> ssh root@${node_ip} "systemctl status kube-apiserver |grep 'Active:'"

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

Active: active (running) since Wed 2021-03-31 14:04:30 CST; 15s ago

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

Active: active (running) since Wed 2021-03-31 14:04:35 CST; 10s ago

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

Active: active (running) since Wed 2021-03-31 14:04:40 CST; 5s ago

[root@kubernetes-01 work]#

Make sure the state is active (running); otherwise check the logs with journalctl -u kube-apiserver to find the cause.

Check the cluster status

# Show cluster information
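The same state check can be turned into a pass/fail test. This is a sketch only: is_running is a hypothetical helper, sample_ok is copied from the output above, and sample_bad is an invented failure line for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only when a "systemctl status" Active line
# reports the unit as active (running).
is_running() {
  case "$1" in
    *"Active: active (running)"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Line copied from the run above.
sample_ok='   Active: active (running) since Wed 2021-03-31 14:04:30 CST; 15s ago'
# Invented failure line, for illustration only.
sample_bad='   Active: failed (Result: exit-code)'

for line in "${sample_ok}" "${sample_bad}"; do
  if is_running "${line}"; then
    echo "OK:   ${line}"
  else
    echo "FAIL: ${line}"
  fi
done
```

On a live node, systemctl is-active --quiet kube-apiserver gives the same answer through its exit status without any text parsing.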

[root@kubernetes-01 work]# kubectl cluster-info

Kubernetes master is running at https://172.20.0.20:6443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

[root@kubernetes-01 work]#

# List resources in all namespaces

[root@kubernetes-01 work]# kubectl get all --all-namespaces

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default service/kubernetes ClusterIP 10.254.0.1 <none> 443/TCP 2m6s

[root@kubernetes-01 work]#

# Show component status

[root@kubernetes-01 work]# kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

controller-manager Unhealthy Get "http://127.0.0.1:10252/healthz": dial tcp 127.0.0.1:10252: connect: connection refused

scheduler Unhealthy Get "http://127.0.0.1:10251/healthz": dial tcp 127.0.0.1:10251: connect: connection refused

etcd-0 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

[root@kubernetes-01 work]#

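At this point controller-manager and scheduler are reported as Unhealthy because neither has been deployed yet, so the health checks against 127.0.0.1 are refused.

Reference article: https://zhongpan.tech/2019/10/30/018-output-change-kubectl-get-cs/

GitHub issue: https://github.com/kubernetes/kubernetes/issues/83024

Check the ports kube-apiserver listens on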
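To list only the components that are not healthy, the status table can be filtered on its STATUS column. A minimal sketch, assuming the table has been captured into a variable (the sample is copied from the output above, with the long error messages shortened):

```shell
#!/usr/bin/env bash
# Sample copied from the "kubectl get componentstatuses" output above;
# the MESSAGE column is shortened for readability.
cs_output='NAME STATUS MESSAGE ERROR
controller-manager Unhealthy Get "http://127.0.0.1:10252/healthz": connection refused
scheduler Unhealthy Get "http://127.0.0.1:10251/healthz": connection refused
etcd-0 Healthy {"health":"true"}
etcd-1 Healthy {"health":"true"}
etcd-2 Healthy {"health":"true"}'

# Skip the header row, then print the name of every component whose
# STATUS column is anything other than Healthy.
unhealthy=$(printf '%s\n' "${cs_output}" | awk 'NR > 1 && $2 != "Healthy" { print $1 }')

echo "components needing attention:"
echo "${unhealthy}"
```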

[root@kubernetes-01 work]# sudo netstat -lnpt|grep kube

tcp 0 0 172.20.0.20:6443 0.0.0.0:* LISTEN 3679/kube-apiserver

[root@kubernetes-01 work]#

- 6443: the secure port that receives https requests; every request is authenticated and authorized;

- The insecure port is disabled, so nothing listens on 8080;
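Both statements can be asserted against the netstat listing. The sketch below uses the line captured above as sample input; port_is_listening is a hypothetical helper, and it assumes the usual netstat -lnpt column layout (local address in column 4, state in column 6).

```shell
#!/usr/bin/env bash
# Line copied from the netstat output above.
netstat_output='tcp 0 0 172.20.0.20:6443 0.0.0.0:* LISTEN 3679/kube-apiserver'

# Hypothetical helper: succeed if some LISTEN socket in the listing ($2)
# has a local address ending in ":<port>" ($1).
port_is_listening() {
  local port="$1" listing="$2"
  printf '%s\n' "${listing}" | awk -v p=":${port}" '
    $6 == "LISTEN" && substr($4, length($4) - length(p) + 1) == p { found = 1 }
    END { exit(found ? 0 : 1) }'
}

port_is_listening 6443 "${netstat_output}" && echo "secure port 6443 is listening"
port_is_listening 8080 "${netstat_output}" || echo "insecure port 8080 is closed"
```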

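6.2 Controller-Manager Cluster

This subsection describes how to deploy a highly available kube-controller-manager cluster. The cluster has 3 nodes; after startup they compete in a leader election, one node becomes the leader and the others stay blocked. When the leader becomes unavailable, the blocked nodes hold a new election to produce a new leader, which keeps the service available.

To secure communication, this document first generates an x509 certificate and private key. kube-controller-manager uses the certificate in the following two cases:

- when communicating with the secure port of kube-apiserver;

- when serving prometheus-format metrics on its secure port (https, 10252);

Note: unless stated otherwise, every operation in this subsection is run on the kubernetes-01 node.

Create the kube-controller-manager certificate and private key

Create the certificate signing request: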

cd /opt/k8s/work

cat > kube-controller-manager-csr.json <<EOF

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"172.20.0.20",

"172.20.0.21",

"172.20.0.22"

],

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:kube-controller-manager",

"OU": "z0ukun"

}

]

}

EOF

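- The hosts list contains the IPs of all kube-controller-manager nodes;

- CN and O are both system:kube-controller-manager; the built-in Kubernetes ClusterRoleBinding system:kube-controller-manager grants kube-controller-manager the permissions it needs to work.

Generate the certificate and private key: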

cd /opt/k8s/work

cfssl gencert -ca=/opt/k8s/work/ca.pem \

-ca-key=/opt/k8s/work/ca-key.pem \

-config=/opt/k8s/work/ca-config.json \

-profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

ls kube-controller-manager*pem

[root@kubernetes-01 work]# cfssl gencert -ca=/opt/k8s/work/ca.pem \

> -ca-key=/opt/k8s/work/ca-key.pem \

> -config=/opt/k8s/work/ca-config.json \

> -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

2021/03/31 14:08:34 [INFO] generate received request

2021/03/31 14:08:34 [INFO] received CSR

2021/03/31 14:08:34 [INFO] generating key: rsa-2048

2021/03/31 14:08:35 [INFO] encoded CSR

2021/03/31 14:08:35 [INFO] signed certificate with serial number 167734461365167531618854597958116803518757833256

[root@kubernetes-01 work]# ls kube-controller-manager*pem

kube-controller-manager-key.pem kube-controller-manager.pem

[root@kubernetes-01 work]#

Distribute the generated certificate and private key to all master nodes:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp kube-controller-manager*.pem root@${node_ip}:/etc/kubernetes/cert/

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> scp kube-controller-manager*.pem root@${node_ip}:/etc/kubernetes/cert/

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

kube-controller-manager-key.pem 100% 1675 1.9MB/s 00:00

kube-controller-manager.pem 100% 1513 2.6MB/s 00:00

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

kube-controller-manager-key.pem 100% 1675 1.8MB/s 00:00

kube-controller-manager.pem 100% 1513 2.0MB/s 00:00

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

kube-controller-manager-key.pem 100% 1675 1.4MB/s 00:00

kube-controller-manager.pem 100% 1513 1.9MB/s 00:00

[root@kubernetes-01 work]#

Create and distribute the kubeconfig file

kube-controller-manager uses a kubeconfig file to access the apiserver. The file provides the apiserver address, the embedded CA certificate and the kube-controller-manager certificate:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/k8s/work/ca.pem \

--embed-certs=true \

--server="https://##NODE_IP##:6443" \

--kubeconfig=kube-controller-manager.kubeconfig

kubectl config set-credentials system:kube-controller-manager \

--client-certificate=kube-controller-manager.pem \

--client-key=kube-controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=kube-controller-manager.kubeconfig

kubectl config set-context system:kube-controller-manager \

--cluster=kubernetes \

--user=system:kube-controller-manager \

--kubeconfig=kube-controller-manager.kubeconfig

kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

- kube-controller-manager runs on the same nodes as kube-apiserver, so it accesses kube-apiserver directly through the node IP.

Distribute the kubeconfig to all master nodes:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

sed -e "s/##NODE_IP##/${node_ip}/" kube-controller-manager.kubeconfig > kube-controller-manager-${node_ip}.kubeconfig

scp kube-controller-manager-${node_ip}.kubeconfig root@${node_ip}:/etc/kubernetes/kube-controller-manager.kubeconfig

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> sed -e "s/##NODE_IP##/${node_ip}/" kube-controller-manager.kubeconfig > kube-controller-manager-${node_ip}.kubeconfig

> scp kube-controller-manager-${node_ip}.kubeconfig root@${node_ip}:/etc/kubernetes/kube-controller-manager.kubeconfig

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

kube-controller-manager-172.20.0.20.kubeconfig 100% 6453 8.1MB/s 00:00

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

kube-controller-manager-172.20.0.21.kubeconfig 100% 6453 5.1MB/s 00:00

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

kube-controller-manager-172.20.0.22.kubeconfig 100% 6453 5.2MB/s 00:00

[root@kubernetes-01 work]#
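Because the kubeconfig is generated with a ##NODE_IP## placeholder and patched per node, it can be worth confirming that no placeholder survives before a file is copied out. A small sketch of such a guard; has_placeholder and the temporary file names are assumptions for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical guard: succeed if any of the given files still contains
# a ##NAME## style placeholder.
has_placeholder() {
  grep -Eq '##[A-Z_]+##' "$@"
}

tmp=$(mktemp -d)

# A one-line stand-in for the generated kubeconfig.
printf 'server: https://##NODE_IP##:6443\n' > "${tmp}/template.kubeconfig"

# Same substitution as in the distribution loop above.
sed -e "s/##NODE_IP##/172.20.0.20/" "${tmp}/template.kubeconfig" > "${tmp}/node.kubeconfig"

if has_placeholder "${tmp}/node.kubeconfig"; then
  echo "placeholder left in node.kubeconfig, do not distribute" >&2
else
  echo "node.kubeconfig is fully rendered"
fi
```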

Create the kube-controller-manager systemd unit template file

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

cat > kube-controller-manager.service.template <<EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

WorkingDirectory=${K8S_DIR}/kube-controller-manager

ExecStart=/opt/k8s/bin/kube-controller-manager \\

--profiling \\

--cluster-name=kubernetes \\

--controllers=*,bootstrapsigner,tokencleaner \\

--kube-api-qps=1000 \\

--kube-api-burst=2000 \\

--leader-elect \\

--use-service-account-credentials \\

--concurrent-service-syncs=2 \\

--bind-address=##NODE_IP## \\

--secure-port=10252 \\

--tls-cert-file=/etc/kubernetes/cert/kube-controller-manager.pem \\

--tls-private-key-file=/etc/kubernetes/cert/kube-controller-manager-key.pem \\

--port=0 \\

--authentication-kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \\

--client-ca-file=/etc/kubernetes/cert/ca.pem \\

--requestheader-allowed-names="aggregator" \\

--requestheader-client-ca-file=/etc/kubernetes/cert/ca.pem \\

--requestheader-extra-headers-prefix="X-Remote-Extra-" \\

--requestheader-group-headers=X-Remote-Group \\

--requestheader-username-headers=X-Remote-User \\

--authorization-kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \\

--cluster-signing-cert-file=/etc/kubernetes/cert/ca.pem \\

--cluster-signing-key-file=/etc/kubernetes/cert/ca-key.pem \\

--experimental-cluster-signing-duration=876000h \\

--horizontal-pod-autoscaler-sync-period=10s \\

--concurrent-deployment-syncs=10 \\

--concurrent-gc-syncs=30 \\

--node-cidr-mask-size=24 \\

--service-cluster-ip-range=${SERVICE_CIDR} \\

--pod-eviction-timeout=6m \\

--terminated-pod-gc-threshold=10000 \\

--root-ca-file=/etc/kubernetes/cert/ca.pem \\

--service-account-private-key-file=/etc/kubernetes/cert/ca-key.pem \\

--kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \\

--logtostderr=true \\

--v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

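- --port=0: disables the insecure (http) port; the --address flag then has no effect, while --bind-address still applies;

- --secure-port=10252 and --bind-address=##NODE_IP##: serve https requests, including /metrics, on port 10252 of the node IP (setting --bind-address=0.0.0.0 would listen on all network interfaces instead);

- --kubeconfig: path to the kubeconfig file that kube-controller-manager uses to connect to and authenticate against kube-apiserver;

- --authentication-kubeconfig and --authorization-kubeconfig: kube-controller-manager uses these to connect to the apiserver and to authenticate and authorize incoming client requests. kube-controller-manager no longer uses --tls-ca-file to verify the client certificates of https metrics requests. If these two kubeconfig flags are not set, client requests to the kube-controller-manager https port are rejected (insufficient permissions).

- --cluster-signing-*-file: CA certificate and key used to sign the certificates created through TLS Bootstrap;

- --experimental-cluster-signing-duration: validity period of the TLS Bootstrap certificates;

- --root-ca-file: CA certificate placed into each container's ServiceAccount, used to verify the kube-apiserver certificate;

- --service-account-private-key-file: private key that signs ServiceAccount tokens; it must pair with the public key file given to kube-apiserver via --service-account-key-file;

- --service-cluster-ip-range: Service Cluster IP range; it must match the flag of the same name on kube-apiserver;

- --leader-elect=true: enables leader election for clustered operation; the instance elected as leader does the work while the other instances stay blocked on standby;

- --controllers=*,bootstrapsigner,tokencleaner: list of enabled controllers; tokencleaner automatically removes expired Bootstrap tokens;

- --horizontal-pod-autoscaler-*: custom metrics related flags, supporting autoscaling/v2alpha1;

- --tls-cert-file and --tls-private-key-file: server certificate and private key used when serving metrics over https;

- --use-service-account-credentials=true: each controller inside kube-controller-manager uses its own ServiceAccount to access kube-apiserver;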

Create and distribute the kube-controller-manager systemd unit file for each node

Replace the variables in the template to create a systemd unit file for each node:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for (( i=0; i < 3; i++ ))

do

sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/" -e "s/##NODE_IP##/${NODE_IPS[i]}/" kube-controller-manager.service.template > kube-controller-manager-${NODE_IPS[i]}.service

done

ls kube-controller-manager*.service

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for (( i=0; i < 3; i++ ))

> do

> sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/" -e "s/##NODE_IP##/${NODE_IPS[i]}/" kube-controller-manager.service.template > kube-controller-manager-${NODE_IPS[i]}.service

> done

[root@kubernetes-01 work]# ls kube-controller-manager*.service

kube-controller-manager-172.20.0.20.service kube-controller-manager-172.20.0.21.service kube-controller-manager-172.20.0.22.service

[root@kubernetes-01 work]#
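The loop above hard-codes three nodes. As a sketch of an alternative, the bound can be derived from the NODE_IPS array itself, followed by a quick check that every rendered file carries its own IP. The array contents and the one-line stand-in template below are assumptions for illustration; in the article they come from environment.sh and the full unit template.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Assumed values; in the article this array is defined in /opt/k8s/bin/environment.sh
NODE_IPS=(172.20.0.20 172.20.0.21 172.20.0.22)

work_dir=$(mktemp -d)
cd "${work_dir}"

# Stand-in for the real template, reduced to the one line that varies per node
printf -- '--bind-address=##NODE_IP## \\\n' > kube-controller-manager.service.template

# Loop bound taken from the array length instead of a literal 3
for (( i = 0; i < ${#NODE_IPS[@]}; i++ )); do
  sed -e "s/##NODE_IP##/${NODE_IPS[i]}/" kube-controller-manager.service.template \
    > "kube-controller-manager-${NODE_IPS[i]}.service"
done

# Each rendered file must mention its own IP and contain no leftover marker
for ip in "${NODE_IPS[@]}"; do
  grep -qF -- "--bind-address=${ip} " "kube-controller-manager-${ip}.service"
  if grep -qF '##NODE_IP##' "kube-controller-manager-${ip}.service"; then
    echo "leftover marker in unit file for ${ip}" >&2
    exit 1
  fi
done

files=(kube-controller-manager-*.service)
rendered_count=${#files[@]}
echo "rendered ${rendered_count} unit files"
```

If NODE_IPS ever lists more machines than there are masters, the literal bound of the original loop is the safer choice, so treat this variant as a convenience for clusters where the array holds exactly the master nodes.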

Distribute to all master nodes:

cd /opt/k8s/work

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

scp kube-controller-manager-${node_ip}.service root@${node_ip}:/etc/systemd/system/kube-controller-manager.service

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> scp kube-controller-manager-${node_ip}.service root@${node_ip}:/etc/systemd/system/kube-controller-manager.service

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

kube-controller-manager-172.20.0.20.service 100% 1882 1.9MB/s 00:00

>>> 172.20.0.21

Warning: Permanently added '172.20.0.21' (ECDSA) to the list of known hosts.

kube-controller-manager-172.20.0.21.service 100% 1882 1.8MB/s 00:00

>>> 172.20.0.22

Warning: Permanently added '172.20.0.22' (ECDSA) to the list of known hosts.

kube-controller-manager-172.20.0.22.service 100% 1882 1.6MB/s 00:00

[root@kubernetes-01 work]#

Start the kube-controller-manager service

source /opt/k8s/bin/environment.sh

for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p ${K8S_DIR}/kube-controller-manager"

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-controller-manager && systemctl restart kube-controller-manager"

done

[root@kubernetes-01 work]# source /opt/k8s/bin/environment.sh

[root@kubernetes-01 work]# for node_ip in ${NODE_IPS[@]}

> do

> echo ">>> ${node_ip}"

> ssh root@${node_ip} "mkdir -p ${K8S_DIR}/kube-controller-manager"

> ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-controller-manager && systemctl restart kube-controller-manager"

> done

>>> 172.20.0.20

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

Warning: Permanently added '172.20.0.20' (ECDSA) to the list of known hosts.

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-controller-manager.service to /etc/systemd/system/kube-controller-manager.service.

>>> 172.20.0.21